Same model. The one that hallucinates in web chat ships a 200-line feature in one shot inside Claude Code. Codex’s /goal resolves an entire issue end to end. The model did not suddenly get smarter. What changed is the structure.

Why It Works

The loop in conversational AI looks like this:

LLM -> human -> LLM -> human

Every piece of feedback is natural language: probabilistic generation followed by probabilistic evaluation. Nothing in the loop is a fixed reference point, so errors compound and accuracy degrades multiplicatively.

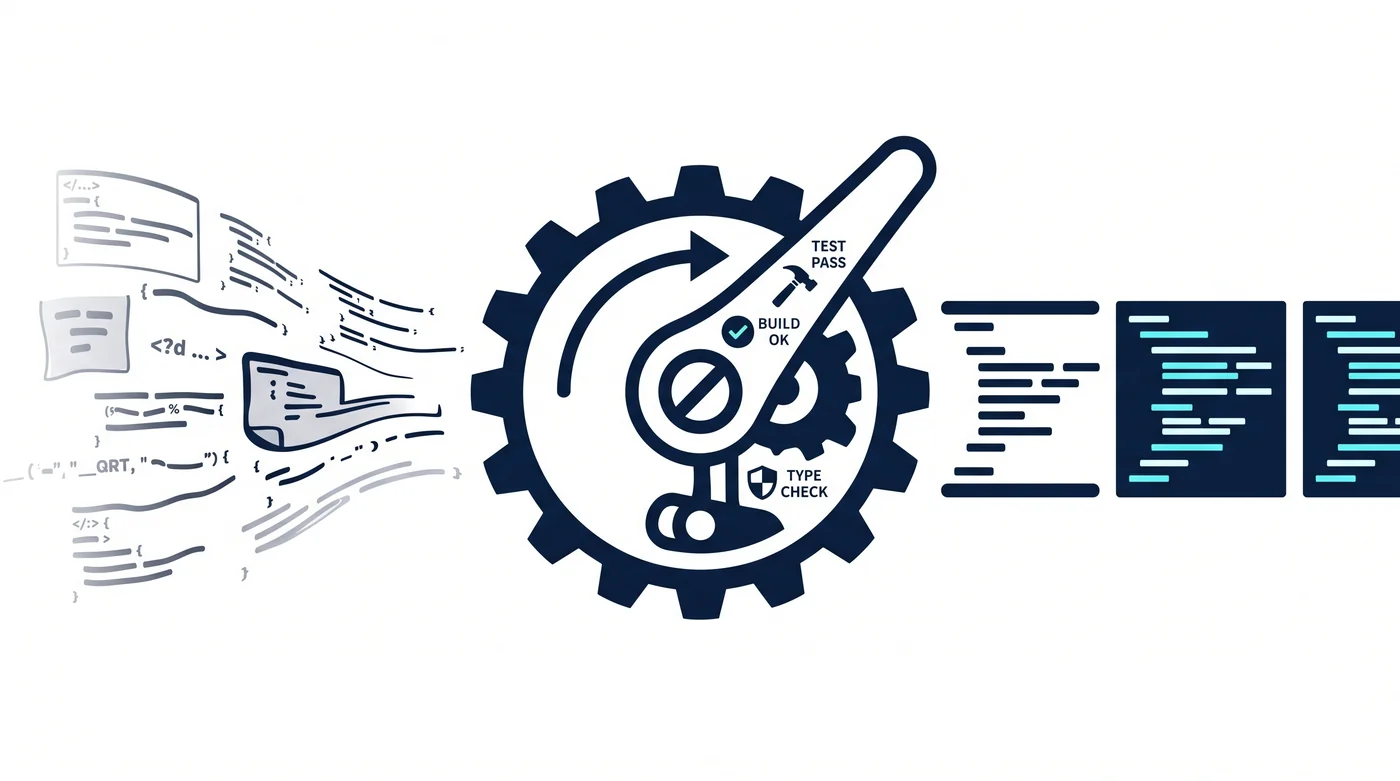

The loop in a coding agent is different:

LLM -> code generation -> file save -> test run -> pass/fail -> LLM

LLM -> code edit -> build -> success/failure -> LLM

LLM -> type check -> error message -> LLM

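There is a deterministic gate inside the loop. The filesystem saves exactly what was written. Tests either pass or fail. The compiler rejects code that is wrong. Nobody designed these as safeguards for the agent, yet they act as unintentional ratchets: once a check passes, that progress is locked in.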

An LLM is an unreliable component. But building a reliable protocol on top of unreliable components is fundamental engineering. TCP creates reliable delivery over an unreliable network. RAID creates reliable storage over unreliable disks. ECC creates reliable memory over unreliable bits.

Coding agents work for the same reason. They layer deterministic verifiers – tests, builds, linters, type checkers – on top of an unreliable LLM. The cause of success is not model performance. It is topology.
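The layering can be sketched as a retry loop around a deterministic check, much like retransmission around an acknowledgement. This is a minimal illustration, not any agent's actual implementation: `run_with_gate`, `flaky_generate`, and `check_add` are hypothetical names, and the "model" is a stub that is wrong on its first attempt.

```python
from typing import Callable, Optional


def run_with_gate(
    generate: Callable[[Optional[str]], str],
    verify: Callable[[str], Optional[str]],
    max_attempts: int = 5,
) -> Optional[str]:
    """Retry an unreliable generator until a deterministic verifier accepts.

    generate(feedback) returns a candidate; verify(candidate) returns None on
    success or an error message on failure.
    """
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(feedback)
        feedback = verify(candidate)
        if feedback is None:
            return candidate  # the gate passed, so this result is accepted
    return None  # the gate never passed; nothing unverified is returned


# Hypothetical stand-in for a model: wrong without feedback, right with it.
def flaky_generate(feedback: Optional[str]) -> str:
    if feedback is None:
        return "def add(a, b): return a - b"
    return "def add(a, b): return a + b"


# Hypothetical stand-in for a test suite: runs the code and checks one case.
def check_add(source: str) -> Optional[str]:
    namespace: dict = {}
    exec(source, namespace)
    result = namespace["add"](2, 3)
    return None if result == 5 else f"add(2, 3) returned {result}, expected 5"


accepted = run_with_gate(flaky_generate, check_add)
print(accepted)
```

The generator is free to be wrong. What makes the overall loop dependable is that only candidates that pass `verify` ever leave it.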

Then Why Does It Break?

I said it works. Yet it often breaks. Why?

Because a ratchet that happens to be there and a ratchet that is deliberately designed are very different things.

There Are Gaps Without Ratchets

What happens when the agent modifies code that has no tests? The build passes, the lint passes, but the feature is broken. In segments with no deterministic gate, the LLM judges probabilistically, and probabilistic judgments degrade multiplicatively.

Suppose that out of 200 endpoints, 180 have tests and 20 do not. The agent handles the 180 flawlessly and silently plants bugs in the 20. That is why you get “it is almost done but something feels off.”

Feedback Lacks Information Density

I ran an experiment sorting 1,000 words. A CPU finished in 0.08 ms at 100% accuracy. The LLM took 438 seconds and reached 97.7% accuracy. That alone is remarkable – 97.7% through pure cognition. But the real discovery was elsewhere.

I varied only the level of feedback on the same result:

| Feedback | Result |
|---|---|
| None | 6 errors (99.4%) |
| “There are errors” | 10 errors (99.0%) – worse |
| “There are 23 errors” | 1 error (99.9%) |
| “6 errors, here they are” | 0 errors (100%) |

Telling the model only that something is wrong triggers over-correction and makes things worse. Giving it the error count creates a target, so it hunts persistently. Giving it the locations lets it fix everything perfectly.
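The four feedback levels can be made concrete with a small helper. This is a sketch under assumptions: it counts an error as a position where the candidate differs from the correctly sorted list (the experiment's own counting method is not specified here), it assumes the candidate has the same length as the source, and the names `misplaced` and `feedback` are hypothetical.

```python
from typing import List


def misplaced(candidate: List[str], source: List[str]) -> List[int]:
    """Indices where the candidate differs from the correctly sorted source."""
    expected = sorted(source)
    return [i for i, (got, want) in enumerate(zip(candidate, expected)) if got != want]


def feedback(candidate: List[str], source: List[str], level: str) -> str:
    """Build feedback at one of four levels: none, exists, count, locations."""
    expected = sorted(source)
    errors = misplaced(candidate, source)
    if level == "none":
        return ""
    if not errors:
        return "No errors."
    if level == "exists":
        return "There are errors."
    if level == "count":
        return f"There are {len(errors)} errors."
    if level == "locations":
        details = "; ".join(
            f"index {i}: got {candidate[i]!r}, expected {expected[i]!r}" for i in errors
        )
        return f"{len(errors)} errors, here they are: {details}"
    raise ValueError(f"unknown feedback level: {level}")


words = ["pear", "apple", "fig", "kiwi"]
attempt = ["apple", "kiwi", "fig", "pear"]  # "kiwi" and "fig" are swapped
for level in ("none", "exists", "count", "locations"):
    print(f"{level}: {feedback(attempt, words, level)}")
```

All four messages come from the same deterministic comparison. The only thing that changes is how much of that information is passed back to the model.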

Most agents today are stuck at the second level. When a test fails, the agent learns that “something is wrong,” but the feedback does not convey the structural reason why. Error messages exist, but they describe symptoms, not causes.

Blind Spots Exist, and Repetition Does Not Fix Them

In the sorting experiment, the LLM left 6 errors in round R2. In round R3 it reported “no errors.” In round R4b it reported “no errors” again. It missed the same 6 in the same way every time.

Without hints, accuracy plateaued at 99.4% no matter how many times it retried. Only when told “6 remain” did it finally reach 100%.

The same thing happens with coding agents. The agent introduces a bug, judges “no issues” in self-review, and when asked to fix it again, misses the same spot. This is why retry is not a solution. Blind spots are a structural limitation arising from the model’s probabilistic nature, not a lack of effort.

Multiplication Kicks In at Scale

Chain two steps at 97.7% accuracy and you get 0.977^2 ≈ 95.5%. Three steps: 93.3%. Ten steps: 79.2%.

An agent edits a single file well. But ask it to refactor across 100 files? Even at 97% per step, 100 steps yield 0.97^100 ≈ 4.8%. Failure is virtually guaranteed.

This is the mathematical explanation of “vibe coding breaks at 200 endpoints.” In small projects, the number of chained steps is low enough for the odds to hold. In large projects, multiplication works catastrophically.
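The compounding is easy to reproduce. The sketch below assumes every step succeeds independently with the same probability, which is a simplification, and the helper names `chained_accuracy` and `steps_until_below` are hypothetical.

```python
def chained_accuracy(per_step: float, steps: int) -> float:
    """Probability that every one of `steps` independent steps succeeds."""
    return per_step ** steps


def steps_until_below(per_step: float, floor: float) -> int:
    """Smallest number of chained steps whose overall accuracy drops below `floor`."""
    if not (0.0 < per_step < 1.0 and 0.0 < floor < 1.0):
        raise ValueError("per_step and floor must both be strictly between 0 and 1")
    steps, accuracy = 0, 1.0
    while accuracy >= floor:
        accuracy *= per_step
        steps += 1
    return steps


for per_step, steps in [(0.977, 2), (0.977, 3), (0.977, 10), (0.97, 100)]:
    print(f"{per_step} ^ {steps} = {chained_accuracy(per_step, steps):.1%}")

# How long a chain can get before success is less likely than a coin flip.
print(steps_until_below(0.97, 0.5))
```

Under these assumptions a chain at 97% per step falls below even odds after 23 steps, long before it reaches 100 files.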

What Is Needed

The reason it works and the reason it breaks point to the same thing: the presence or absence of deterministic verification gates.

Today’s agents rely on ratchets that happen to be there – tests, builds, linters. Design them deliberately, and they get stronger.

What it means to design ratchets deliberately:

First, identify the gaps without ratchets. Code without tests, APIs without schemas, data without types. Every place the agent judges probabilistically is a vulnerability.

Second, increase the information density of feedback. Returning only pass/fail induces over-correction. Convey “where, why, and what diverges from expectation” in a structured way.

Third, insert deterministic gates between chained steps. Running 10 steps at once makes multiplication catastrophic, but locking each step with a ratchet resets the degradation.
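The third point can be sketched as a pipeline that commits each step only after its gate passes and stops at the first step that cannot pass. This is an illustration under assumptions, not a prescribed design: `Step`, `run_gated`, and the toy steps are hypothetical, and real gates would be test runs, builds, or schema checks.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

State = Dict[str, int]


@dataclass
class Step:
    name: str
    apply: Callable[[State], State]  # unreliable work, e.g. an LLM edit
    gate: Callable[[State], bool]    # deterministic check, e.g. a test run


def run_gated(steps: List[Step], state: State, max_attempts: int = 3) -> Tuple[State, List[str]]:
    """Run steps in order, committing a step's result only when its gate passes."""
    committed: List[str] = []
    for step in steps:
        for _ in range(max_attempts):
            candidate = step.apply(dict(state))  # work on a copy of verified state
            if step.gate(candidate):
                state = candidate  # ratchet: lock in verified progress
                committed.append(step.name)
                break
        else:
            break  # gate never passed: stop instead of building on unverified work
    return state, committed


attempts = {"n": 0}


def flaky_increment(s: State) -> State:
    """Toy unreliable step: adds 2 on its first call, then correctly adds 1."""
    attempts["n"] += 1
    delta = 1 if attempts["n"] > 1 else 2
    s["value"] += delta
    return s


steps = [
    Step("init", lambda s: {**s, "value": 1}, lambda s: s["value"] == 1),
    Step("increment", flaky_increment, lambda s: s["value"] == 2),
    Step("always_wrong", lambda s: {**s, "value": -1}, lambda s: s["value"] == 3),
]
final_state, done = run_gated(steps, {"value": 0})
print(final_state, done)
```

A failed attempt never touches the committed state, so a later step always starts from verified work, and a step that cannot pass halts the chain at a known point instead of propagating its error.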

An LLM is a remarkable generator. It sorts 1,000 words at 97.7% accuracy through pure reasoning – something even humans cannot do. But anything less than 100% collapses under repetition. 0.977 squared is about 0.955.

Coding agents work not because the model is smart, but because there is a deterministic gate inside the loop. They break wherever that gate is missing.

Generation can be probabilistic. Verification must be deterministic.

Related Posts

- Ratchet Pattern — How to Make Agents Finish the Job — Structure and principles of the ratchet pattern

- Model IQ Matters Less Than Feedback Topology — The structure of feedback determines performance

- Constraints Are Contracts — Rational constraints make systems free

- filefunc — One File, One Concept — LLM-native code structure

- First Principles AI Thinking — How to think with AI